By Dr. Aris Thorne


Let’s travel forward for a moment. The date is October 16, 2025. On the surface, the digital world is humming along, a symphony of perfectly executed code. Your news feed is flawlessly curated, your ads know what you want before you do, and the internet feels like a space built just for you. But on Wall Street, the human heart is in revolt.

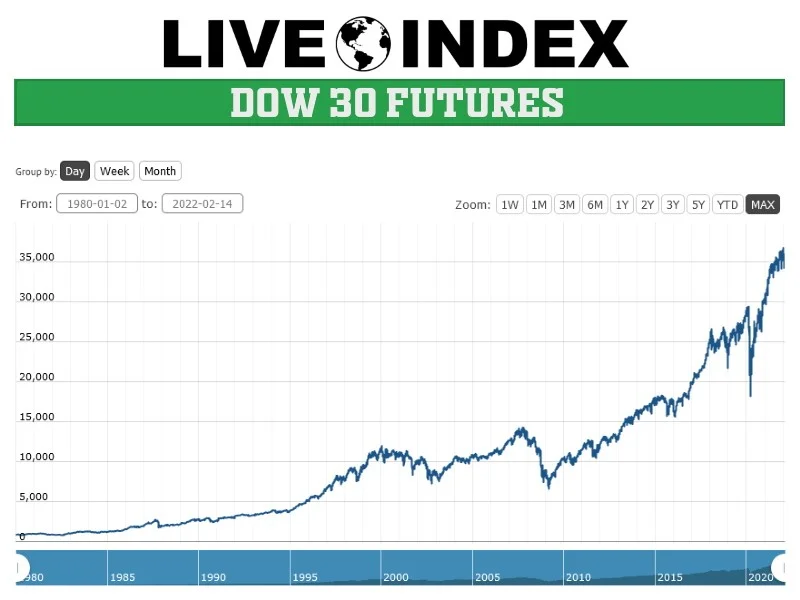

The market is bleeding. The Dow is down 300 points, the VIX—Wall Street’s so-called “fear index”—has spiked to its highest level in months, and investors are fleeing to the safety of bonds. Why? Because of a rumor, a worry, a ghost in the financial machine. A mid-sized bank, Zions Bancorporation, discloses a $50 million charge on a few bad loans, and suddenly, Jamie Dimon’s chilling words from a few days prior echo through the trading floors: “When you see one cockroach, there are probably more.”

That one phrase, so primal and visceral, is all it takes. Fear, a deeply human and irrational emotion, goes viral. Billions of dollars in value are wiped out not because of a systemic failure of code, but because of a failure of confidence.

And I have to be honest, when I see a moment like this, I’m struck by the breathtaking paradox of our age. We are building the most sophisticated, data-driven, and predictive digital infrastructure in human history, yet we remain completely at the mercy of its oldest and most unpredictable operating system: the human spirit.

The Perfect Map of an Imperfect World

Right now, as you read this, an invisible architecture is working tirelessly to understand you. It’s detailed in documents most of us never read, like the NBCUniversal Cookie Notice—a fascinating blueprint for the digital self. It describes a world of first-party and third-party cookies, of web beacons, embedded scripts, and cross-device tracking. It details how information is stored, accessed, and measured to personalize your experience, select your content, and deliver your ads.

This invisible network is constantly learning, adapting, and trying to build a perfect digital model of you. That is an absolutely staggering feat of engineering when you stop and think about the sheer scale of it all. It uses things like ETags (cache labels that can double as digital fingerprints) and software development kits (bundles of prebuilt code that apps embed to talk to outside services) to create a persistent identity for you across your laptop, your phone, and even your smart TV. The goal is a seamless, frictionless existence.
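For readers who want to see how small that trick really is, here is a minimal sketch of ETag-based recognition. It is an illustration under stated assumptions, not how any particular company does it: the `handle_request` function and the `visits_by_etag` table are hypothetical names, and the "server" is simulated in memory rather than run over a real network. The only real-world pieces are the standard HTTP `ETag` and `If-None-Match` headers.

```python
import uuid

# Hypothetical in-memory "tracker": maps each ETag value to a visit count.
visits_by_etag: dict[str, int] = {}


def handle_request(request_headers: dict[str, str]) -> tuple[int, dict[str, str]]:
    """Simulate a server answering a request for a cacheable resource.

    A returning browser echoes its stored ETag in If-None-Match, which
    lets the server recognise it without setting any cookie.
    """
    etag = request_headers.get("If-None-Match")
    if etag in visits_by_etag:
        visits_by_etag[etag] += 1
        # 304 Not Modified: the browser keeps its cached copy and its ETag.
        return 304, {"ETag": etag}
    # First visit (or cleared cache): mint a unique ETag for this browser.
    etag = f'"{uuid.uuid4().hex}"'
    visits_by_etag[etag] = 1
    return 200, {"ETag": etag}


# A browser's first request carries no validator.
status, response_headers = handle_request({})
# On the next visit the browser replays the ETag it cached.
status_again, _ = handle_request({"If-None-Match": response_headers["ETag"]})
print(status, status_again, visits_by_etag[response_headers["ETag"]])  # 200 304 2
```

Notice what the table holds: a label and a count. It can tell that the same browser came back. It has no field for why.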

But here’s the disconnect. This entire system, for all its brilliance, is fundamentally built to measure behavior, not intent. It tracks clicks, not courage. It logs page views, not passion. It measures time-on-site, not turmoil. What does a “Personalization Cookie” know of the gut-wrenching fear that causes a trader to sell off a life’s savings? How can an “Ad Selection Cookie” possibly comprehend the hope that makes an entrepreneur pour everything into a new venture against all odds?

It’s like meticulously mapping every single ant in a colony while completely missing the fact that a flood is about to wash the whole thing away. We have the data. We have the analytics. But do we have the wisdom to understand what it all means? Are we building a digital world that’s so focused on the “what” that it has completely lost the plot on the “why”?

The Digital Shrug

Every so often, this perfect, logical system collides with the messy reality of being human, and the result is a quiet, frustrating error message: “Access to this page has been denied because we believe you are using automation tools to browse the website.”

When I see a message like “Access to this page has been denied,” I don’t just see a technical glitch. I see a metaphor. The system detects a pattern it wasn’t programmed to understand, a series of actions that don’t fit the neat little box of expected human behavior, and its only solution is to shut the door. It’s a digital shrug. The machine, faced with an anomaly, simply says, “I don’t get it. You must be a bot.”

This is the fundamental crisis of our data-driven age. We’ve built these incredible engines of logic, but we’ve forgotten to teach them empathy. We’ve created a global network that can process staggering volumes of data in the blink of an eye, yet it is blindsided by a single, powerful emotion like the fear rippling through the market on that October afternoon.

This isn’t a critique of the technology itself. The technology is the kind of breakthrough that reminds me why I got into this field in the first place. The ability to connect and understand the world on this scale is a miracle. But it feels like we’re in the earliest days of the printing press. We’ve invented the machine to print the pages, but we haven’t written the great novels or the constitutions yet. We have the tool, but we’re still figuring out its soul.

The ethical responsibility on us—the builders, the dreamers, the users—is immense. As we weave this technology deeper into the fabric of our lives, from our finances to our social connections, we have to ask ourselves: are we building a world that serves our humanity, or are we sanding down our humanity to fit the logic of the machine?

The Real Upgrade We Need

For all the talk about faster processors and bigger data sets, the next great technological leap won’t be measured in gigahertz or petabytes. It will be measured in understanding. The next paradigm shift isn’t about artificial intelligence; it’s about artificial wisdom.

The market panic of 2025 is a warning shot. It’s a reminder that no amount of tracking or personalization can ever fully account for the beautiful, terrifying, and unpredictable chaos of the human heart. Our greatest challenge isn’t to build systems that can predict every click. It’s to build systems that can appreciate the complex, emotional, and often irrational human being who is doing the clicking.

That’s the next frontier. And it’s the one that truly matters.